ESP8266 Wi-Fi Analog Clock: Key Takeaways

- GitHub Stars: 131

- Languages: C++ 64.1%, C 35.9%

- License: MIT

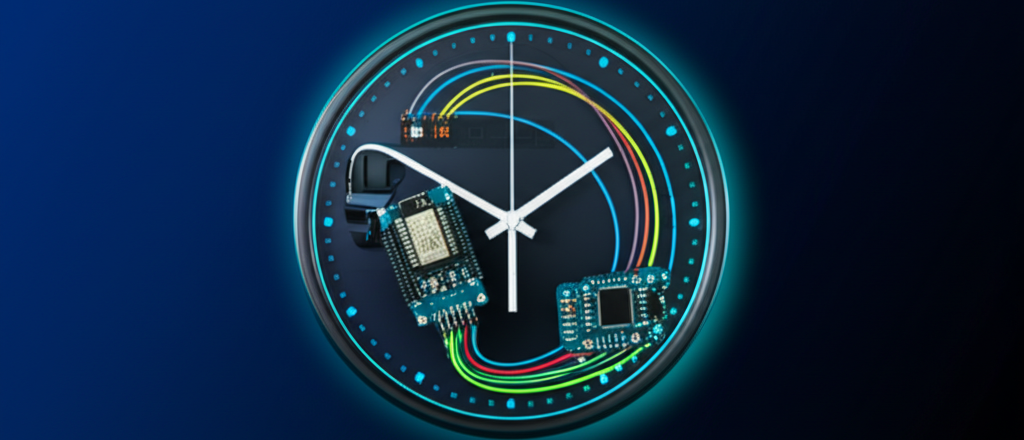

From a $4 Walmart Clock to an NTP Clock

ESP8266_WiFi_Analog_Clock converts an analog clock sold for $3.88 at Walmart into a Wi-Fi clock.[GitHub] A WEMOS D1 Mini gets the time from an NTP server, resynchronizes automatically every 15 minutes, and handles daylight saving time.
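This article does not describe how the project computes daylight saving time, so the following is only a minimal sketch. It assumes US Eastern time and the current US rule: DST runs from 2:00 a.m. on the second Sunday of March to 2:00 a.m. on the first Sunday of November. It converts a UTC Unix timestamp (for example, an NTP reply converted to Unix time) into local time. The helper names (`daysFromCivil`, `yearFromDays`, `nthSunday`, `isUsEasternDst`, `usEasternLocal`) are hypothetical, the date arithmetic follows Howard Hinnant's public-domain civil-calendar algorithms, and the actual sketch may handle time zones differently.

```cpp
#include <cassert>
#include <cstdint>

// Days since 1970-01-01 for a Gregorian date (after Hinnant's days_from_civil).
int64_t daysFromCivil(int64_t y, unsigned m, unsigned d) {
    y -= m <= 2;                                                           // Jan/Feb count with the previous year
    const int64_t era = (y >= 0 ? y : y - 399) / 400;
    const unsigned yoe = static_cast<unsigned>(y - era * 400);             // year of era, 0..399
    const unsigned doy = (153 * (m > 2 ? m - 3 : m + 9) + 2) / 5 + d - 1;  // day of March-based year
    const unsigned doe = yoe * 365 + yoe / 4 - yoe / 100 + doy;            // day of era
    return era * 146097 + static_cast<int64_t>(doe) - 719468;
}

// Calendar year containing a day count (the year part of Hinnant's civil_from_days).
int64_t yearFromDays(int64_t z) {
    z += 719468;
    const int64_t era = (z >= 0 ? z : z - 146096) / 146097;
    const unsigned doe = static_cast<unsigned>(z - era * 146097);
    const unsigned yoe = (doe - doe / 1460 + doe / 36524 - doe / 146096) / 365;
    const unsigned doy = doe - (365 * yoe + yoe / 4 - yoe / 100);
    const unsigned mp = (5 * doy + 2) / 153;                     // March-based month, 0..11
    return static_cast<int64_t>(yoe) + era * 400 + (mp >= 10);  // Jan/Feb belong to the next year
}

// Day count of the n-th Sunday (n >= 1) of a month; 1970-01-01 was a Thursday.
int64_t nthSunday(int64_t year, unsigned month, int n) {
    const int64_t first = daysFromCivil(year, month, 1);
    const int64_t weekday = (first + 4) % 7;  // 0 = Sunday; valid for first >= 0
    return first + (7 - weekday) % 7 + 7 * (n - 1);
}

// True if a UTC Unix time falls inside US Eastern DST (assumed rule, see lead-in).
bool isUsEasternDst(int64_t utc) {
    assert(utc >= 0);
    const int64_t year = yearFromDays(utc / 86400);
    const int64_t start = nthSunday(year, 3, 2) * 86400 + 7 * 3600;  // 02:00 EST = 07:00 UTC
    const int64_t end = nthSunday(year, 11, 1) * 86400 + 6 * 3600;   // 02:00 EDT = 06:00 UTC
    return utc >= start && utc < end;
}

// Local Eastern time as Unix-style seconds: UTC-4 during DST, UTC-5 otherwise.
int64_t usEasternLocal(int64_t utc) {
    return utc + (isUsEasternDst(utc) ? -4 : -5) * 3600;
}
```

With this approach, the clock springs forward an hour in March by pulsing ahead. In November, the target time jumps back an hour, and because the hands cannot run backward, the catch-up logic would simply wait for the actual time to catch up.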

The ESP8266 directly drives the Lavet stepping motor inside the clock. Ten times per second, it compares the hand position with the actual time and, if the second hand is lagging, sends a pulse to advance it.[GitHub README] Because the motor can't run backward, when the hands are ahead it simply waits for the actual time to catch up.
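The per-tick decision can be sketched as follows. This is not the project's code: it assumes the hand position is tracked as seconds on a 12-hour dial and that each pulse advances the second hand by one second. It treats the hand as "ahead" whenever it would be more than half a dial behind (an arbitrary threshold chosen for illustration), and it models the coil as a state change only. It also reflects a general property of Lavet stepping motors: each pulse must have the opposite polarity of the previous one. `LavetDriver`, `decide`, and `secondsBehind` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>

constexpr int32_t kDialSeconds = 12 * 60 * 60;  // one full turn of the hour hand

enum class Action { Hold, Pulse };

// How many seconds the hand must advance to reach `actual`, wrapping around the dial.
int32_t secondsBehind(int32_t hand, int32_t actual) {
    assert(hand >= 0 && hand < kDialSeconds);
    assert(actual >= 0 && actual < kDialSeconds);
    return (actual - hand + kDialSeconds) % kDialSeconds;
}

// One decision per tick. Assumed rule: up to half a dial behind counts as "lagging";
// anything more is treated as the hand being ahead, so we wait instead of stepping.
Action decide(int32_t hand, int32_t actual) {
    const int32_t behind = secondsBehind(hand, actual);
    return (behind > 0 && behind <= kDialSeconds / 2) ? Action::Pulse : Action::Hold;
}

// Hypothetical model of the motor state; it records what a real driver would do.
struct LavetDriver {
    int32_t hand = 0;          // tracked hand position, seconds on the dial
    bool nextPositive = true;  // polarity for the next coil pulse
    int pulses = 0;            // total pulses issued

    void pulse() {
        // Real hardware would energize the coil here with polarity `nextPositive`.
        nextPositive = !nextPositive;  // Lavet motors step only on alternating polarity
        hand = (hand + 1) % kDialSeconds;
        ++pulses;
    }

    // Called every 100 ms with the current time expressed as dial seconds.
    void tick(int32_t actual) {
        if (decide(hand, actual) == Action::Pulse) pulse();
    }
};
```

On real hardware, `pulse()` would also drive the coil in the direction given by `nextPositive`; the pin assignment and pulse width are left out because they depend on the wiring.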

Remembers the Time Even When Power Is Off

The key design feature is the Microchip 47L04 EERAM.[GitHub] EERAM is SRAM with a built-in EEPROM backup: when power fails, the chip copies its SRAM contents to EEPROM, so the hand position survives an outage. When power returns, the clock immediately resumes synchronizing from the saved position.
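The save-and-restore idea can be sketched with a plain byte array standing in for the 47L04's 512 bytes of SRAM. On the real chip, the bytes would be written over I2C, and the chip itself copies SRAM to EEPROM when power drops; neither step is modeled here. The address, the `kMagic` marker, the check byte, and the function names are a hypothetical layout, not the project's.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Stand-in for the 47L04's 512 bytes of SRAM (the real chip is accessed over I2C).
using FakeEeram = std::array<uint8_t, 512>;

constexpr std::size_t kPosAddr = 0;       // hypothetical address of the saved record
constexpr uint8_t kMagic = 0xA5;          // hypothetical "record is valid" marker
constexpr uint16_t kDialSeconds = 43200;  // 12 hours in seconds

// Store the hand position (seconds on a 12-hour dial) with a marker and check byte.
void savePosition(FakeEeram& mem, uint16_t handSeconds) {
    assert(handSeconds < kDialSeconds);
    const uint8_t lo = static_cast<uint8_t>(handSeconds & 0xFF);
    const uint8_t hi = static_cast<uint8_t>(handSeconds >> 8);
    mem[kPosAddr] = kMagic;
    mem[kPosAddr + 1] = lo;
    mem[kPosAddr + 2] = hi;
    mem[kPosAddr + 3] = static_cast<uint8_t>(lo ^ hi ^ kMagic);
}

// Read the position back after power-up; returns false if the record looks invalid.
bool loadPosition(const FakeEeram& mem, uint16_t& handSeconds) {
    const uint8_t lo = mem[kPosAddr + 1];
    const uint8_t hi = mem[kPosAddr + 2];
    if (mem[kPosAddr] != kMagic) return false;
    if (mem[kPosAddr + 3] != static_cast<uint8_t>(lo ^ hi ^ kMagic)) return false;
    const uint16_t value = static_cast<uint16_t>(lo | (hi << 8));
    if (value >= kDialSeconds) return false;
    handSeconds = value;
    return true;
}
```

The check byte is a cheap guard against a record left half-written; if `loadPosition` fails on power-up, the position is unknown and has to be entered again through the web setup.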

Initial setup is done through a web interface. On first power-up, you enter the current hand position on a web page; from then on, the EERAM keeps track of it. The web interface also offers status monitoring and an SVG visualization of the clock.
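Entering the hand position on the web page ultimately means turning what you read off the dial into a number the firmware can track. The sketch below assumes a hypothetical `H:MM:SS` text field with hours from 1 to 12 and converts it to seconds on a 12-hour dial; the function name `parseHandPosition` and the input format are assumptions, and the project's actual form fields may differ.

```cpp
#include <cassert>
#include <cstdio>

// Parse "H:MM:SS" as read off the dial (hour 1-12) into seconds on a 12-hour dial.
// Returns -1 for malformed or out-of-range input (hypothetical convention).
int parseHandPosition(const char* text) {
    assert(text != nullptr);
    int h = 0, m = 0, s = 0;
    char extra = 0;
    // Exactly three numbers must match; a fourth match means trailing junk.
    if (std::sscanf(text, "%d:%d:%d%c", &h, &m, &s, &extra) != 3) return -1;
    if (h < 1 || h > 12 || m < 0 || m > 59 || s < 0 || s > 59) return -1;
    return (h % 12) * 3600 + m * 60 + s;  // 12 o'clock is the top of the dial (0)
}
```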

If You Want to Build It

All you need is a WEMOS D1 Mini, a 47L04 EERAM, and a cheap analog clock, soldered together on a perfboard. The firmware is an Arduino sketch, so it's easy to modify, and the MIT license lets you use it freely.

Frequently Asked Questions (FAQ)

Q: How much does the entire build cost?

A: The Walmart clock costs $3.88, a WEMOS D1 Mini runs $3-5, and a 47L04 EERAM is about $2. Those three parts come to roughly $9-11, so expect about $10-15 in total once you add a perfboard and wire. Reusing an analog clock you already own lowers the cost further. Soldering equipment is not included in this estimate.

Q: What happens if NTP synchronization fails?

A: The clock keeps running if an NTP request fails. The ESP8266's internal timer keeps time, and the clock retries at the next 15-minute sync. During a long internet outage the internal timer may drift, but the error is corrected at the first successful sync after the connection is restored.
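The fallback behavior described above can be sketched as a small software clock: keep the last NTP timestamp together with the `millis()` value at which it arrived, and extrapolate from there until the next successful sync. `SoftClock`, the negative-value convention for a failed request, and the retry policy are assumptions for illustration; only the 15-minute interval comes from the article.

```cpp
#include <cassert>
#include <cstdint>

constexpr uint32_t kSyncIntervalMs = 15UL * 60UL * 1000UL;  // 15 minutes, per the article

// Hypothetical software clock: last NTP time plus elapsed millis().
struct SoftClock {
    int64_t epochAtSync = 0;   // UTC seconds from the last successful NTP reply
    uint32_t msAtSync = 0;     // millis() when that reply arrived
    uint32_t msAtAttempt = 0;  // millis() of the last attempt, successful or not
    bool attempted = false;

    // Estimated UTC now. Unsigned subtraction stays correct across the 32-bit wrap.
    int64_t now(uint32_t ms) const {
        return epochAtSync + static_cast<int64_t>((ms - msAtSync) / 1000U);
    }

    // Attempt once at boot, then once per interval whether or not the last try worked.
    bool syncDue(uint32_t ms) const {
        return !attempted || ms - msAtAttempt >= kSyncIntervalMs;
    }

    // Record an attempt. A negative ntpEpoch means "no reply" (assumed convention).
    void onAttempt(uint32_t ms, int64_t ntpEpoch) {
        attempted = true;
        msAtAttempt = ms;
        if (ntpEpoch < 0) return;  // keep the old reference; retry next cycle
        epochAtSync = ntpEpoch;
        msAtSync = ms;
    }
};
```

On the ESP8266, `millis()` is a 32-bit counter that wraps after about 49.7 days, which is why the elapsed time is computed with unsigned subtraction rather than by comparing raw values.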

Q: Can I build it even if I have no programming experience?

A: You need basic soldering skills and some familiarity with the Arduino IDE. The code on GitHub is complete, so you can upload it as is. A basic understanding of electronic circuits helps when assembling the hardware, and the README is detailed enough to be a useful guide.

If you found this article useful, please subscribe to AI Digester.

References

- ESP8266_WiFi_Analog_Clock GitHub Repository – GitHub

- WEMOS D1 Mini Official Page – WEMOS

- Microchip 47L04 EERAM Product Page – Microchip